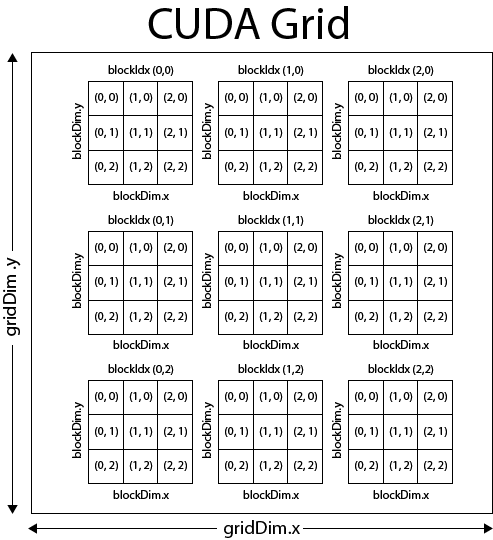

Solutions to many problems in computer science are formulated with matrices, so the ability to perform fast matrix multiplication is really important.

PyTorch's tensor and variable interface is generated automatically from the ATen library, so we can more or less translate our Python implementation 1:1.

`dim3` is a data type provided by CUDA Fortran that has three dimensions. In this case we are dealing with a one-dimensional block and grid, so we specify a dimensionality of 1 for the other two dimensions.

On `__syncthreads()`: to my understanding it's more accurate to say that `func1` is executed at least for the first 512 threads. Before I edited this answer (back in 2010) I measured that 14x8x32 threads were synchronized using `__syncthreads()`. I would greatly appreciate it if someone could test this again for more accurate information.

One of the most common tasks in CUDA programming is to parallelize a loop using a kernel. As an example, let's use our old friend SAXPY. The basic sequential implementation uses a for loop. To efficiently parallelize this, we need to launch enough threads to fully utilize the GPU.

A grid-stride loop, in which each thread starts at its global index and steps through the data by the total number of threads in the grid, has advantages over a monolithic kernel:

- Debugging. Because the kernel is a loop, it can be run serially (for example, with one block of one thread). This makes it easier to emulate a serial host implementation to validate results, and it can make printf debugging easier by serializing the print order. Serializing the computation also allows you to eliminate numerical variations caused by changes in the order of operations from run to run, helping you to verify that your numerics are correct before tuning the parallel version.
- Portability and readability. The grid-stride loop code is more like the original sequential loop code than the monolithic kernel code, making it clearer for other users. In fact we can pretty easily write a version of the kernel that compiles and runs either as a parallel CUDA kernel on the GPU or as a sequential loop on the CPU. The Hemi library provides a `grid_stride_range()` helper that makes this trivial using C++11 range-based for loops.
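To make the grid-stride idea for SAXPY concrete, here is a minimal host-side sketch in plain C++. It is not CUDA code and it is not the Hemi API: the function names (`saxpy_sequential`, `saxpy_one_thread`, `saxpy_grid_stride`) are made up for illustration, and the "launch" is just two nested loops that run every emulated thread in turn. The comments note which CUDA built-ins each value would come from in a real kernel.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Sequential SAXPY: y[i] = a * x[i] + y[i] for every element.
void saxpy_sequential(float a, const std::vector<float>& x, std::vector<float>& y) {
    assert(x.size() == y.size());
    for (std::size_t i = 0; i < x.size(); ++i) {
        y[i] = a * x[i] + y[i];
    }
}

// Work done by ONE emulated thread in a grid-stride loop.
// In a real kernel:
//   first  = blockIdx.x * blockDim.x + threadIdx.x
//   stride = blockDim.x * gridDim.x
void saxpy_one_thread(std::size_t first, std::size_t stride, float a,
                      const std::vector<float>& x, std::vector<float>& y) {
    for (std::size_t i = first; i < x.size(); i += stride) {
        y[i] = a * x[i] + y[i];
    }
}

// Hypothetical stand-in for a kernel launch: runs each emulated thread
// one after another on the CPU instead of in parallel on the GPU.
void saxpy_grid_stride(std::size_t grid_dim, std::size_t block_dim, float a,
                       const std::vector<float>& x, std::vector<float>& y) {
    assert(x.size() == y.size());
    assert(grid_dim > 0 && block_dim > 0);
    const std::size_t stride = grid_dim * block_dim;  // total emulated threads
    for (std::size_t block = 0; block < grid_dim; ++block) {
        for (std::size_t thread = 0; thread < block_dim; ++thread) {
            saxpy_one_thread(block * block_dim + thread, stride, a, x, y);
        }
    }
}
```

With `grid_dim = 1` and `block_dim = 1` the stride is 1, so the single emulated thread walks the array exactly like the sequential loop. That is the serial mode the debugging remarks above rely on. With any other launch shape, each element is still visited exactly once, even when there are fewer threads than elements.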
In this tutorial, we will tackle a problem that is well suited to parallel programming and quite a useful one, unlike the previous one :P. The CUDA programming guide ("CUDA: A General-Purpose Parallel Computing Platform and Programming Model") describes the CUDA model and interface.

The main point is that `__syncthreads()` is a block-wide operation: it synchronizes the threads of one block, not all threads of the kernel. I'm not sure about the exact number of threads that `__syncthreads()` can synchronize, since you can create a block with more than 512 threads and let the warp handle the scheduling. (The 512 figure matches the per-block thread limit of early, compute capability 1.x devices; newer devices allow up to 1024 threads per block.)

Suppose a kernel that starts with `func1()` and later calls `func2()` is executed with 600 threads. Then the kernel must run twice, and the order of execution will be:

1. `func1` is executed for the first 512 threads.
2. `func2` is executed for the first 512 threads.
3. `func1` is executed for the remaining threads.
4. `func2` is executed for the remaining threads.

CUDA thread organization: CUDA kernels are executed by many different threads in parallel. Blocks can be organized into a 1D, 2D, or 3D grid of blocks. In general use, grids tend to be two dimensional, while blocks are three dimensional. However, this really depends most on the application you are writing. For a one-dimensional structure, only x is set, and the values of the other two dimensions, y and z, are both 1.

As a worked example of a flat thread ID: `Thread ID = (1*3*2) + (1*3) + 2 = 6 + 3 + 2 = 11`. Here `(1*3*2)` counts the threads in block 0, and `(1*3)` counts threads (0,0), (1,0) and (2,0) in block 1. Then add the `threadIdx.x` of the particular thread.
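The thread ID arithmetic (6 + 3 + 2 = 11) and the rule that unused dimensions are 1 can be checked with a small host-side sketch. This is plain C++, not CUDA: `Dim3` and `flat_thread_id` are hypothetical names that only mimic `dim3` and the index built-ins. The concrete case is my reading of the numbers above, namely a 1D grid of 3x2 blocks, block index 1, and thread (2,1) within that block.

```cpp
// Hypothetical stand-in for CUDA's dim3: any dimension that is not
// specified defaults to 1, so Dim3(256) describes a one-dimensional shape.
struct Dim3 {
    unsigned x, y, z;
    constexpr Dim3(unsigned x_ = 1, unsigned y_ = 1, unsigned z_ = 1)
        : x(x_), y(y_), z(z_) {}
};

// Flat thread ID for a 1D grid of 2D blocks (illustrative helper).
//   block_idx_x * block_dim.x * block_dim.y : threads in all earlier blocks
//   ty * block_dim.x                        : full rows before this thread's row
//   tx                                      : position within the row (threadIdx.x)
constexpr unsigned flat_thread_id(unsigned block_idx_x, Dim3 block_dim,
                                  unsigned tx, unsigned ty) {
    return block_idx_x * block_dim.x * block_dim.y + ty * block_dim.x + tx;
}

// Assumed case: 3x2 blocks, block 1, thread (2,1) -> 6 + 3 + 2 = 11.
static_assert(flat_thread_id(1, Dim3(3, 2), 2, 1) == 11,
              "matches the worked thread ID example");
```

Because the function is `constexpr`, the `static_assert` checks the arithmetic at compile time. Other block shapes or thread coordinates can be tried the same way.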
